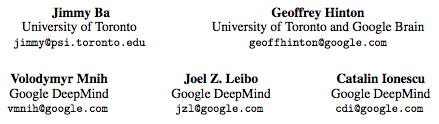

Using Fast Weights to Attend to the Recent Past

Published: 2019-01-09

Until recently, research on artificial neural networks was largely restricted to systems with only two types of variable: neural activities that represent the current or recent input, and weights that learn to capture regularities among inputs, outputs and payoffs.

There is no good reason for this restriction. Synapses have dynamics at many different time-scales, and this suggests that artificial neural networks might benefit from variables that change more slowly than activities but much faster than the standard weights. These “fast weights” can be used to store temporary memories of the recent past, and they provide a neurally plausible way of implementing the type of attention to the past that has recently proved very helpful in sequence-to-sequence models. By using fast weights we can avoid the need to store copies of neural activity patterns.
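
The claim that fast weights remove the need to store copies of activity patterns can be illustrated with a small sketch. It assumes a decaying outer-product (Hebbian-style) update for the fast weight matrix, which is one way such a memory could be realized; the text above does not specify the update rule. The function names and the `decay` and `rate` parameters and their values are illustrative assumptions, and the nonlinearity and any normalization a full model would need are left out. Under these assumptions, multiplying the fast weight matrix by a query vector gives the same result as attending over explicitly stored past activity vectors with decayed dot-product scores.

```python
def outer(u, v):
    """Outer product of two vectors as a list of rows."""
    return [[ui * vj for vj in v] for ui in u]


def matvec(m, v):
    """Matrix-vector product."""
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]


def update_fast_weights(a, h, decay=0.9, rate=0.5):
    """Assumed update rule: A <- decay * A + rate * h h^T."""
    hh = outer(h, h)
    return [
        [decay * aij + rate * hij for aij, hij in zip(row_a, row_h)]
        for row_a, row_h in zip(a, hh)
    ]


def attend_with_stored_copies(history, query, decay=0.9, rate=0.5):
    """Reference computation that keeps every past activity vector.

    Each stored vector is weighted by its dot product with the query,
    scaled down by how long ago it was seen.
    """
    out = [0.0] * len(query)
    for age, h in enumerate(reversed(history)):
        score = rate * decay ** age * sum(hi * qi for hi, qi in zip(h, query))
        out = [oi + score * hi for oi, hi in zip(out, h)]
    return out


def demo():
    # Hypothetical past hidden activity vectors and a current query vector.
    history = [[1.0, 0.0, 0.5], [0.0, 1.0, -0.5], [0.5, 0.5, 0.0]]
    query = [1.0, 0.2, 0.1]

    # Fast-weight route: only the 3x3 matrix is kept, not the history.
    a = [[0.0] * 3 for _ in range(3)]
    for h in history:
        a = update_fast_weights(a, h)

    return matvec(a, query), attend_with_stored_copies(history, query)


if __name__ == "__main__":
    fast, explicit = demo()
    print("fast weights:  ", fast)
    print("stored copies: ", explicit)
```

The two printed vectors agree up to floating-point rounding. The memory cost of the fast-weight route is fixed by the size of the matrix, while the stored-copies route grows with the length of the history.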
